Relational Agents: the use and testing of avatars for HCI

Relational Agents (RAs) are computer constructs designed to interact with humans in a way that promotes the long-term formation of social and emotional bonds. Integrating social psychological principles of relationship building such as empathy, shared self-disclosure and emotional feedback into the RA interface helps to establish these bonds.

Relational agents

Relational agents are generally created with an anthropomorphic (i.e., human-like) form that may be more or less realistic, varying from cartoon-like two-dimensional drawings to animated three-dimensional models developed with advanced graphics software. Most people know this type of model, or avatar, as the visible player character in third-person video games.

However, RAs are usually limited to frontal views from the shoulders or neck up because of limits on computer processing power and on the simulation capabilities of artificial intelligence algorithms.

The video shown here was created as a final project for a Motion Capture class at New York University, and is intended to illustrate the potential uses of RAs as well as methods for testing the acceptance of such agents by their target audience.

MoCap Final Overview from Crystal Butler on Vimeo

Promising areas of application for RAs

One of the most promising areas of application for RAs is in the provision of health care diagnostics and therapy. Researchers at the Relational Agents Group of Northeastern University in Boston, Massachusetts, USA have been developing avatars for staff and patient support services in hospitals and the home [1].

Mental health care may also improve with the deployment of computer-based agents, especially for underserved populations in rural areas and impoverished regions where access to providers is inadequate. Lisetti and colleagues have been working on a behavioral change system that targets problems more likely to be found in these groups, such as obesity and the abuse of legal and illegal drugs [2].

The avatar featured in the video link for this post is intended to target a specialized domain of mental health: caregiver sensitivity. Healthy emotional development during a child’s first two years of life is crucial to establishing a solid foundation for future wellness, and is largely determined by attachment formation based on caregiver-infant interactions.

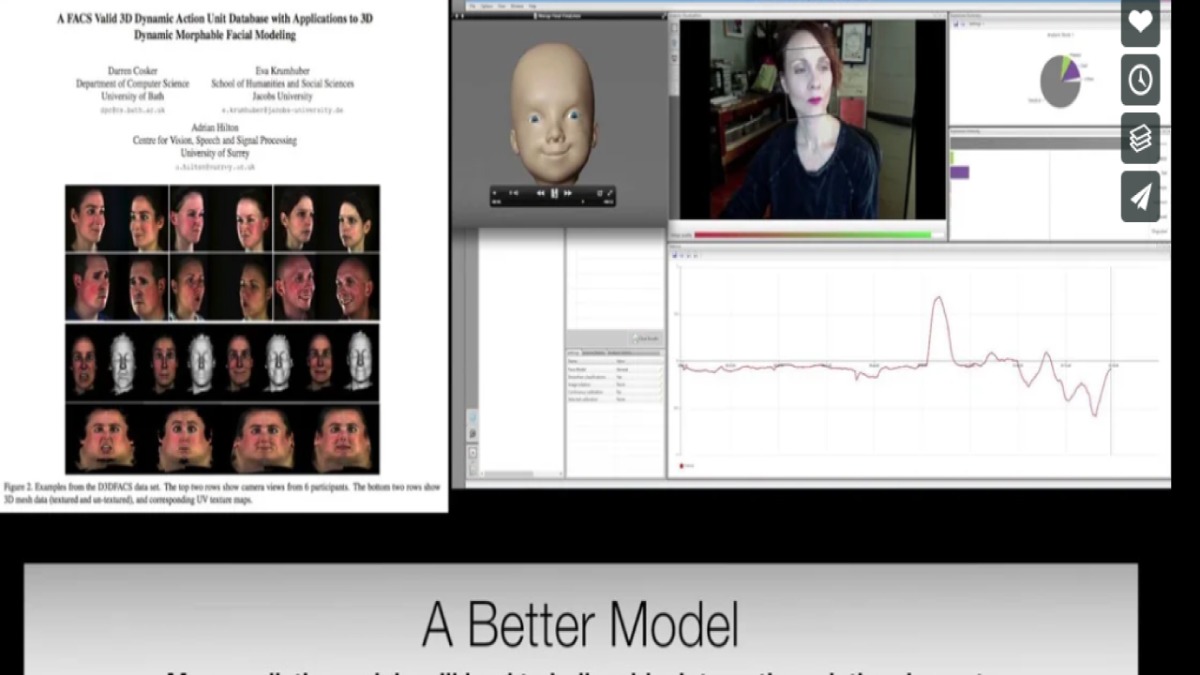

Since communication during this period of a child’s life is nonverbal, sensitivity and responsiveness to cues such as facial expressions are key to providing good childcare. The video demonstrates how a simulated infant could be created for assessing whether a caregiver responds appropriately to designated facial expressions.

RAs and facial expression recognition

Other fields that could effectively employ RAs include teaching and security. Facial expression recognition has been researched at the Machine Perception Laboratory at the University of California, San Diego, as a means of interpreting the perceived difficulty level of a lecture [3]. A computerized tutoring system with an interactive agent could respond empathetically to facial expressions correlated with student distress and ask whether the student needs, for example, to review the material again or get additional help.
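To make the tutoring idea concrete, here is a minimal sketch of the decision step such an agent might use. It assumes some facial expression recognizer already produces a per-frame "distress" score between 0 and 1; that score, the function name `tutor_prompts`, the window size, and the threshold are all hypothetical choices for illustration, not part of any system cited here.

```python
from collections import deque
from typing import Iterable, Iterator, Optional

# Hypothetical prompt text, modeled on the example in the paragraph above.
PROMPT = "Would you like to review this material again or get additional help?"


def tutor_prompts(
    distress_scores: Iterable[float],
    window: int = 5,
    threshold: float = 0.6,
) -> Iterator[Optional[str]]:
    """Yield PROMPT once when the moving-average distress score reaches the
    threshold, and None for every other frame.

    distress_scores is an assumed input: one score in [0, 1] per video frame
    from an unspecified expression recognizer.
    """
    recent = deque(maxlen=window)  # keeps only the last `window` scores
    prompted = False               # avoids repeating the prompt every frame
    for score in distress_scores:
        recent.append(score)
        average = sum(recent) / len(recent)
        if len(recent) == window and average >= threshold and not prompted:
            prompted = True
            yield PROMPT
        else:
            if average < threshold:
                prompted = False   # student recovered; allow a later prompt
            yield None
```

Averaging over a short window rather than reacting to a single frame is a deliberate choice in this sketch: a momentary frown should not interrupt the lesson, while sustained signs of distress should.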

Facial expressions are already being employed as one of several biometric measures used to evaluate truthfulness and detect threat in a test of an RA system at the United States border [4]. The creators built their software into kiosks that display a simulated human face, which asks the viewer a series of questions based on those used by border patrol agents. The kiosk is meant to elicit the same automatic physical indicators of deception that people display when talking with a real person, such as changes in blink rate, stance, or vocal stress.

Influencing factors

A crucial step in establishing the effectiveness of any RA interface is testing acceptance by the target audience, or intended users. Many factors can influence how individuals react to computer agents, including comfort with technology, ease of use, the avatar’s apparent race, gender, and age, cultural appropriateness, and avatar personality type.

As indicated by the work of Bickmore and colleagues [5], a contextually correct, well-designed RA may even be preferred to its human counterpart. A product such as FaceReader [6] could be useful in evaluating user responses to RAs by providing information on users' emotional reactions and comparing them to those seen in real-life, face-to-face communication.
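As a rough illustration of that comparison, the sketch below computes, for each emotion, the difference in mean intensity between sessions with an RA and sessions with a human. The input format (one dictionary of emotion intensities per session) and the function name `mean_emotion_gap` are assumptions made for this example; they do not describe FaceReader's actual output or API.

```python
from statistics import mean
from typing import Dict, List

# Hypothetical data shape: one dict per session, mapping an emotion label
# to an average intensity in [0, 1].
Session = Dict[str, float]


def mean_emotion_gap(
    agent_sessions: List[Session],
    human_sessions: List[Session],
) -> Dict[str, float]:
    """Return, per emotion, mean intensity with the agent minus mean
    intensity with a human. Values near zero suggest similar reactions."""
    emotions = agent_sessions[0].keys()
    return {
        emotion: mean(s[emotion] for s in agent_sessions)
        - mean(s[emotion] for s in human_sessions)
        for emotion in emotions
    }


# Made-up numbers, purely to show the call shape.
agent = [{"happy": 0.75, "sad": 0.25}, {"happy": 0.5, "sad": 0.25}]
human = [{"happy": 0.5, "sad": 0.5}, {"happy": 0.25, "sad": 0.5}]
gaps = mean_emotion_gap(agent, human)
```

A real acceptance study would also need a proper statistical test and enough participants; this only shows the shape of the comparison.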

By Crystal Butler

Master’s of Computer Science Candidate, New York University

VicarVision Intern, Amsterdam NL

[1] Relational Agents Group: http://relationalagents.com/

[2] Lisetti, C., Yasavur, U., de Leon, C., Amini, R., Rishe, N., & Visser, U. (2012). Building an on-demand avatar-based health intervention for behavior change. Proceedings of FLAIRS 2012.

[3] Whitehill, J., Bartlett, M., & Movellan, J. (2012). Automatic facial expression recognition for intelligent tutoring systems.

[4] Higginbotham, A. (2013). Deception is futile when big brother’s lie detector turns its eyes on you. Wired. Retrieved from http://www.wired.com/threatlevel/2013/01/ff-lie-detector

[5] Bickmore, T., Mitchell, S., Jack, B., Paasche-Orlow, M., Pfeifer, L., & O’Donnell, J. (2010). Response to a relational agent by hospital patients with depressive symptoms. Interacting with Computers, 22, 289-298.

[6] FaceReader: https://www.noldus.com/facereader
