Simple tests to complete setups

EthoVision®

Whether you're studying rodents, fish, or other species, EthoVision gives you precise tracking, advanced behavior detection, and reliable, high-quality data—all in one powerful solution that scales with your goals.

- Effortless setup – automated animal detection saves time

- Built-in test templates – quickly start your experiments

- Starts at $3,495 (USD), for all your standard tests

Latest innovations in EthoVision®

In EthoVision 19 you will find various quality-of-life improvements and major improvements to multi-subject tracking. Four new social behavior parameters were added: following, leaving, approach, and social contact.

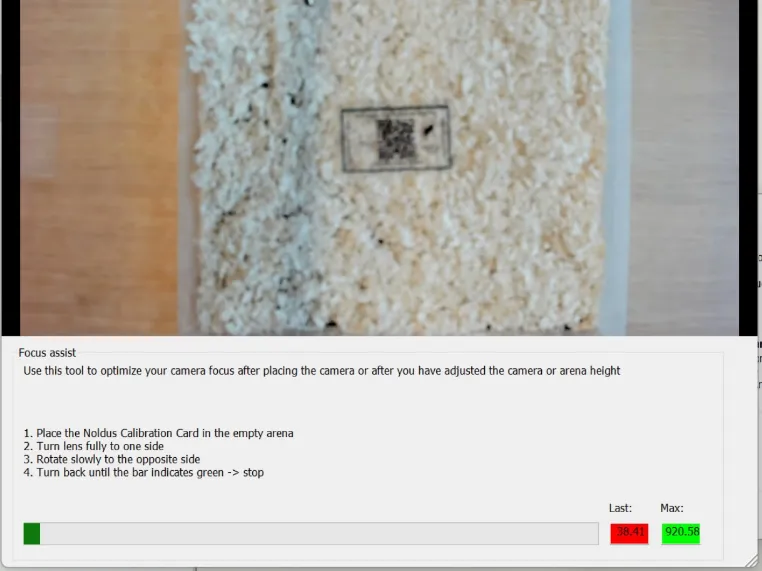

With the new Noldus Calibration Card, focusing your camera and calibrating your arena settings happen with the click of a button. Furthermore, EthoVision's AI models are now better at distinguishing your animals as they interact. This means your data is ready within seconds of finishing your recording, instead of minutes.

The tracking software is extraordinary! Keep the good work going. You make our lives easy and each new version of EthoVision is testimony to the fact that the company is frankly put; the best at its game in the world. We appreciate this very much!

DE WET WOLMARANS, PHD

NORTH-WEST UNIVERSITY, SOUTH AFRICA

Perfect tracking through deep learning technology

EthoVision XT uses advanced deep learning technology to accurately detect and track rodent body points. The neural networks process data in layers, recognizing basic details like color as well as more complex features like the tail.

This ensures stable detection of key points (nose, body center, and tail base), resulting in highly precise tracking for tests like novel object recognition and social preference testing. Deep learning works seamlessly with either evenly colored rodents or patterned rodents such as Long-Evans rats.

*Note that using this technology requires a GPU for the necessary computational power. Visit the setup page to learn more.

Your essential research toolkit

Free e-book

Want to know more about behavioral neuroscience? Check out our free downloadable e-book, in which we explain from A to Z why and how we measure certain behaviors in rodents, what they translate to, and how you can put EthoVision to the best use in your research.

Download the e-book for free

Free buyer's guide

Want to know more about the perfect video tracking setup? Already have the basics down and looking for the best solution for your behavioral research? Our video tracking buyer’s guide lays out the groundwork for you and compares all the options out there on the market.

Download the buyer’s guide for free

EthoVision XT applications

Track in multiple arenas

Increase your throughput by analyzing animal behavior in four, eight, or even sixteen open fields at the same time, or track zebrafish larvae in a 96-well plate. With EthoVision, you can easily define start and stop conditions for each arena individually or for all arenas collectively.

Study social interaction

Track multiple subjects in a single arena. The powerful deep learning algorithm tracks two rodents with minimal marking. This allows you to track socially housed animals while preventing identity swaps, keeping your data clean and of high quality.

Automatic behavior recognition

Automation in behavioral recognition is a crucial step towards advancements in biomedical, genetic, and molecular research. EthoVision accurately and reliably recognizes a list of ten rat or mouse behaviors, saving you time in annotation and reducing observer bias.

Control external equipment

Control devices such as lights, pellet dispensers, and doors, or create your own operant conditioning experiment. EthoVision can also control optogenetic stimulation or create markers in your electrophysiological recording equipment.

Integrate external data

Physiological data, such as heart rate or body temperature, and other external data, like vocalizations, can add valuable information to complement your behavioral data. EthoVision integrates all these data, so you can visualize, select, and analyze all your data in sync.

Control your data

Quality assurance & GLP compliance

The Quality Assurance mode protects your data and creates log files of everything done in a project. This mode is compliant with 21 CFR Part 11, the FDA regulations on electronic records and electronic signatures that apply to, among others, studies conducted under Good Laboratory Practice (GLP).

EthoVision: Also for zebrafish

Video tracking is the ideal tool for zebrafish behavior studies.

Social interaction, anxiety-like behavior, exploration, swimming patterns, or just general activity: with EthoVision, all are easily measured in both adults and larvae.

You can use EthoVision as a standalone solution for video tracking (zebra)fish, or pair it with the DanioVision observation chamber, a complete system designed for high-throughput tracking of zebrafish larvae.

How do I use EthoVision?

Start your experiment in EthoVision from scratch, or save time by using one of the predefined templates. Starting from scratch is easy too: answer a couple of questions and EthoVision adjusts all the settings for you.

All I need to know about EthoVision

EthoVision has been cited in tens of thousands of publications, in a great variety of studies, from cognition, learning and memory to emotional and social behavior, stress, anxiety, fear and many more.

What are the benefits of EthoVision?

EthoVision is made for researchers, by researchers. It saves time and gets you the results you want (and need) with ease. Designed to be at the core of your lab, EthoVision offers data integration and automation of behavioral experiments with any animal.

What can I use EthoVision for?

EthoVision is a thoroughly validated video tracker that can be used for rodents, fish, insects, and other animals. It also serves as the engine for our hardware solutions, such as DanioVision and the PhenoTyper flexible observation cages, to take your research even further.

Ready to take the next step?

We’re passionate about helping you achieve your research goals. Let’s discuss your project and find the tools that fit.

Contact us