FaceReader

Resources

Download our white papers

FaceReader product videos

FaceReader Classifications Demo

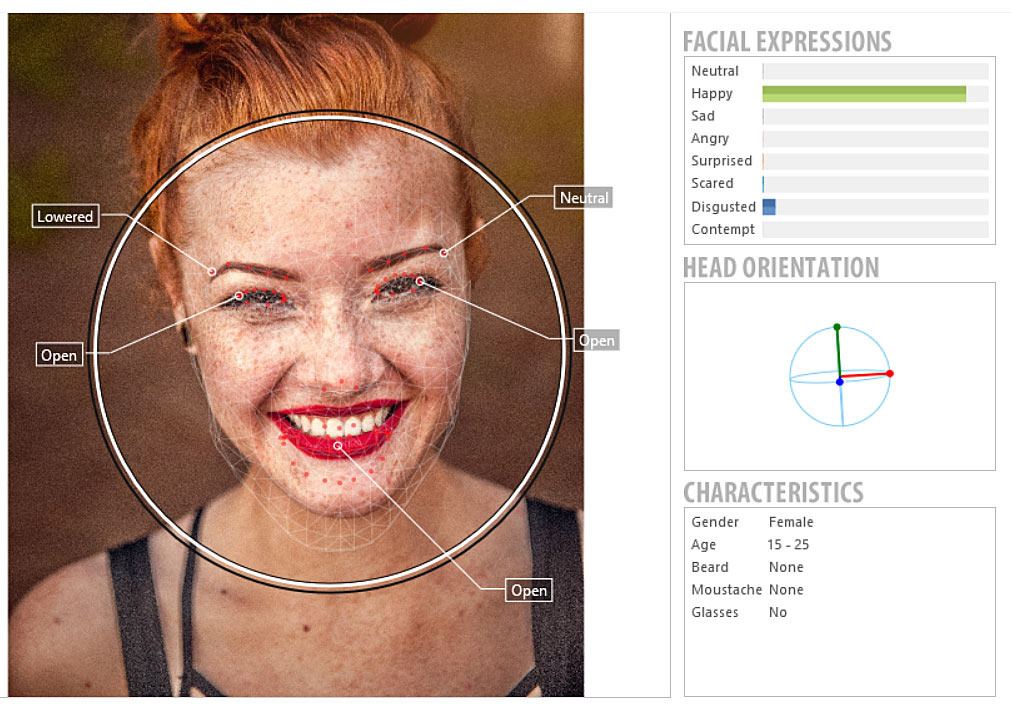

Discover the real-time modeling capabilities of FaceReader.

Affective attitudes in FaceReader

Using the Action Unit Module, FaceReader can detect the affective attitude 'confusion'.

Baby FaceReader

Baby FaceReader enables you to recognize the facial expressions of an infant automatically!

Download our free product overviews

What does your face say?

Curious what emotions your face shows? Upload a photo here and our FaceReader software will analyze it for emotional expression. The higher the quality of the picture, the better the results. For the best results, use only photos in which your face is clearly visible and well lit.

Try it now and find out what your face says.

Customer success stories

How to measure emotions in the Uses and Acceptability Lab

Jean-Marc Diverrez, b<>com Institute of Research and Technology

Sensory evaluation - Food science & technology

Prof. Susan Duncan, Virginia Tech

Social Media Lab: How people make sense of data

Tiffany Andry, University of Louvain

References

- Dupré, D. et al. (2020). A performance comparison of eight commercially available automatic classifiers for facial affect recognition. PLoS ONE, 15(4):e0231968. https://doi.org/10.1371/journal.pone.0231968.

- Märtin, C., Bissinger, B.C., & Asta, P. (2021). Optimizing the digital customer journey - Improving user experience by exploiting emotions, personas and situations for individualized user interface adaptations. Journal of Consumer Behaviour, 1-12. https://doi.org/10.1002/cb.1964.

- Meng, Q. et al. (2020). On the effectiveness of facial expression recognition for evaluation of urban sound perception. Science of The Total Environment, 710, 135484, ISSN 0048-9697. https://doi.org/10.1016/j.scitotenv.2019.135484.

- Rogers, J. (2022). Ethnicity & FaceReader 9 - A FairFace Case Study. Volume 2 of the Proceedings of the joint 12th International Conference on Methods and Techniques in Behavioral Research and 6th Seminar on Behavioral Methods, held online, May 18-20, 2022. www.measuringbehavior.org. Editors: Andrew Spink, Jaroslaw Barski, Anne-Marie Brouwer, Gernot Riedel, & Annesha Sil (2022). https://doi.org/10.6084/m9.figshare.20066849. ISBN: 978-90-74821-94-0

- Stöckli, S. et al. (2018). Facial expression analysis with AFFDEX and FACET: A validation study. Behavior Research Methods, 50(4), 1446-1460. https://doi.org/10.3758/s13428-017-0996-1.

- Talen, L. & den Uyl, T.E. (2021). Complex Website Tasks Increase the Expression Anger Measured with FaceReader Online. International Journal of Human–Computer Interaction. https://doi.org/10.1080/10447318.2021.1938390.

Recent blog posts

Measuring creativity at the GrunbergLab

In the GrunbergLab in Amsterdam, I read Arnon Grunberg’s upcoming release. Two researchers hooked me up: sensors on my left hand, rib, chest, and of course the famous head cap to measure my brain activity.

From start to finish: decreasing abandonment when self-designing a product

Research shows that we like to design our own products, but often abandon the process before we're done. Does it help to provide feedback, and if so, what type of feedback works best?
