Measuring experiential, behavioral, and physiological outputs

Published on Fri 26 Sep. 2014

In a romantic relationship, it is undoubtedly important to show support when one’s partner shares his or her accomplishments and positive life events. Retelling and reliving such events, a process psychologists call capitalization, can evoke emotions in the storyteller, and the listener’s response shapes how the storyteller feels about the event. To study this process in the lab, Samuel Monfort and colleagues created a structured social interaction task for couples with three steps: 1) one partner experiences a positive event, 2) that partner discloses the event to the other, and 3) the other partner clearly communicates a capitalization response, ranging from actively destructive to enthusiastic, supportive, and constructive.

Integrating multiple modalities

Monfort and his team set out to capture the full range of emotional responses, so they measured experiential (subjective feelings), behavioral (facial-motor activity), and physiological (skin conductance) outputs. Behavior and physiology are closely linked; as Patrick Zimmerman and his colleagues explain, a growing number of researchers see the benefit of combining behavioral observations with other types of data, such as heart rate, blood pressure, or eye movements. By integrating multiple modalities, researchers gain a more complete picture of the phenomena they study.

Observing couples

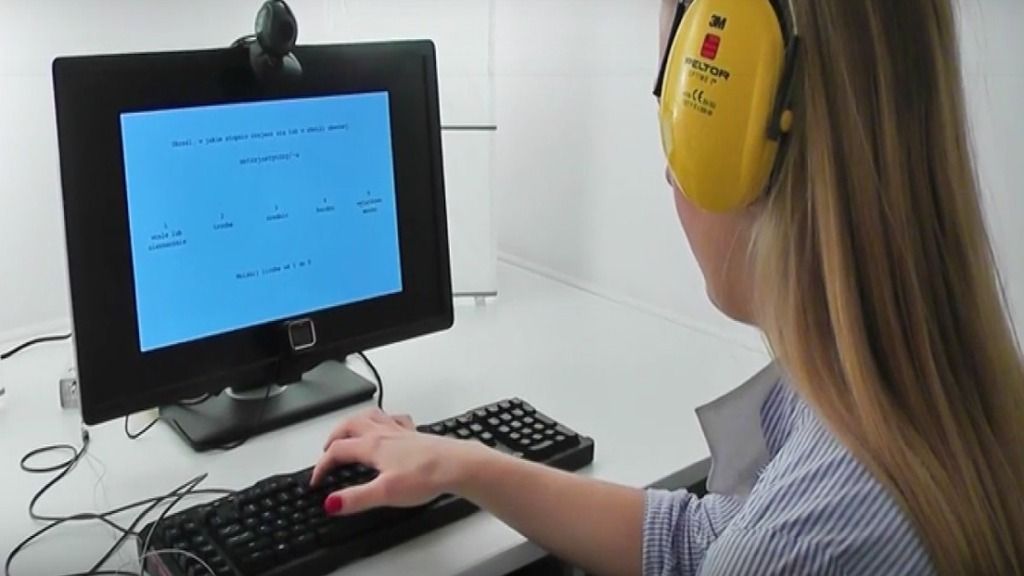

A total of 69 couples participated in Monfort’s study. During the lab interactions, partners sat in separate cubicles and could neither see nor talk to each other. Each interaction consisted of partner A successfully completing a computer task and then sharing this success with partner B. Partner B could choose from four possible responses: active-constructive (e.g., “Wonderful! You did a great job!”), passive-constructive (“Ok. Good.”), active-destructive (“I bet the task wasn’t very hard”), or passive-destructive (“Not much happening here”).
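The four response options form a 2 × 2 grid that crosses two dimensions: active versus passive, and constructive versus destructive. The minimal Python sketch below models that grid, using the example wording quoted above. The names `Activity`, `Direction`, `CapitalizationResponse`, and `RESPONSES` are hypothetical and only illustrate the structure; they are not taken from the study or its software.

```python
from dataclasses import dataclass
from enum import Enum


class Activity(Enum):
    """How engaged the responding partner is (hypothetical name)."""
    ACTIVE = "active"
    PASSIVE = "passive"


class Direction(Enum):
    """Whether the response builds up or undermines the good news (hypothetical name)."""
    CONSTRUCTIVE = "constructive"
    DESTRUCTIVE = "destructive"


@dataclass(frozen=True)
class CapitalizationResponse:
    activity: Activity
    direction: Direction
    example: str  # example wording quoted in the post

    @property
    def label(self) -> str:
        # Builds labels such as "active-constructive"
        return f"{self.activity.value}-{self.direction.value}"


# The four response options, one per cell of the 2 x 2 grid, keyed by label.
RESPONSES = {
    r.label: r
    for r in (
        CapitalizationResponse(Activity.ACTIVE, Direction.CONSTRUCTIVE,
                               "Wonderful! You did a great job!"),
        CapitalizationResponse(Activity.PASSIVE, Direction.CONSTRUCTIVE, "Ok. Good."),
        CapitalizationResponse(Activity.ACTIVE, Direction.DESTRUCTIVE,
                               "I bet the task wasn't very hard"),
        CapitalizationResponse(Activity.PASSIVE, Direction.DESTRUCTIVE,
                               "Not much happening here"),
    )
}

if __name__ == "__main__":
    for label, response in RESPONSES.items():
        print(f"{label:22} {response.example}")
```

Keying the responses by their two dimensions, rather than by a flat list of four strings, makes explicit that every combination of activity and direction appears exactly once.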

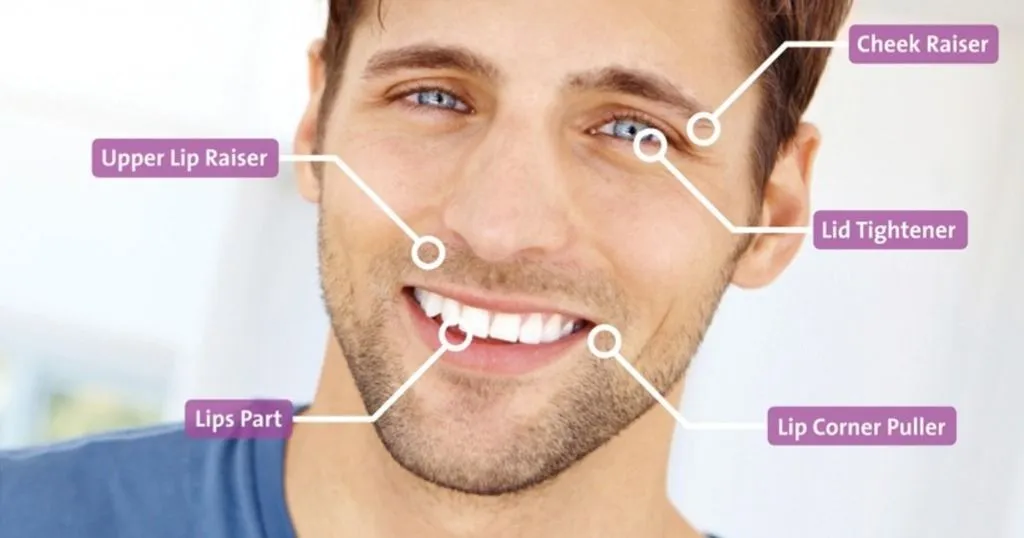

Monfort et al. used FaceReader software to analyze each participant’s facial expressions. As they explain, the software automatically calculates a compound index of facial behavior called valence: the intensity of the happy expression minus the intensity of whichever negative emotion (sadness, fear, anger, or disgust) is strongest at a given moment. Monfort also measured skin conductance via a pair of electrodes taped to digits II and III (the index and middle fingers) of the non-dominant hand.
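The valence rule described above, valence = happy − max(sad, scared, angry, disgusted), takes only a few lines to express. The sketch below assumes per-frame intensities between 0 and 1 stored in a dictionary with hypothetical keys (`happy`, `sad`, `scared`, `angry`, `disgusted`). It illustrates the arithmetic only and is not FaceReader’s actual implementation or export format.

```python
from typing import Mapping

# Hypothetical emotion keys; FaceReader's own export column names may differ.
NEGATIVE_EMOTIONS = ("sad", "scared", "angry", "disgusted")


def valence(intensities: Mapping[str, float]) -> float:
    """Return happy intensity minus the strongest negative-emotion intensity.

    Intensities are assumed to lie in [0, 1], so the result lies in [-1, 1].
    Emotions missing from the mapping are treated as 0.
    """
    happy = intensities.get("happy", 0.0)
    strongest_negative = max(intensities.get(e, 0.0) for e in NEGATIVE_EMOTIONS)
    return happy - strongest_negative


if __name__ == "__main__":
    # One hypothetical video frame in which anger is the strongest negative emotion.
    frame = {"happy": 0.75, "sad": 0.125, "scared": 0.0625, "angry": 0.25, "disgusted": 0.0}
    print(valence(frame))  # 0.5
```

Only the single strongest negative emotion is subtracted, so a frame with weak sadness and strong anger scores the same as one with strong anger alone.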

Partner's response

It was hypothesized that supportive comments such as “Great job” or “Wonderful” would increase happy facial expressions and positive emotions, decrease sympathetic nervous system activity (skin conductance response), and reduce negative emotions in both partners. Monfort et al. found no clear direct physiological effects, but the subjective and behavioral measures indicated that supportive capitalization responses did lead to more positive and less negative felt emotion in both partners, and to more positive facial expressions in the receiver.

As expected, the effects were stronger for the person receiving supportive capitalization responses than for the person giving them; in other words, emotions changed most when a person received verbal support. Monfort’s sample included only couples with high relationship satisfaction, so it would be interesting to replicate this experiment with couples reporting low relationship satisfaction and test whether the findings extend to individuals in other types of relationships.

References

- Zimmerman, P. H., Bolhuis, L., Willemsen, A., Meyer, E. S., & Noldus, L. P. J. J. (2008). The Observer XT: A tool for the integration and synchronization of multimodal signals. In Proceedings of Measuring Behavior 2008 (Maastricht, The Netherlands, August 26-29, 2008), 125-126.

- Monfort, S. S., Kaczmarek, L. D., Kashdan, T. B., Drążkowski, D., Kosakowski, M., Guzik, P., Krauze, T., & Gracanin, A. (2014). Capitalizing on the success of romantic partners: A laboratory investigation on subjective, facial, and physiological emotional processing. Personality and Individual Differences, 68, 149-153.

Project funded by the National Science Center (Poland), grant no. N N106 0167 40, awarded to Lukasz D. Kaczmarek (Adam Mickiewicz University).

Image and movie courtesy of Monfort, S. S., Kaczmarek, L. D., Kashdan, T. B., Drążkowski, D., Kosakowski, M., Guzik, P., Krauze, T., and Gracanin, A.